Prerequisites

- Configure an AI Agent.

- Configure a speech provider in the Voice Preview Settings section of the Project settings.

- Have Voice Gateway set up for your organization.

- Edit access to the HTML of the page where you will embed the widget.

- For testing purposes, allow microphone access in your browser and, if needed, in your device settings.

Setup Overview

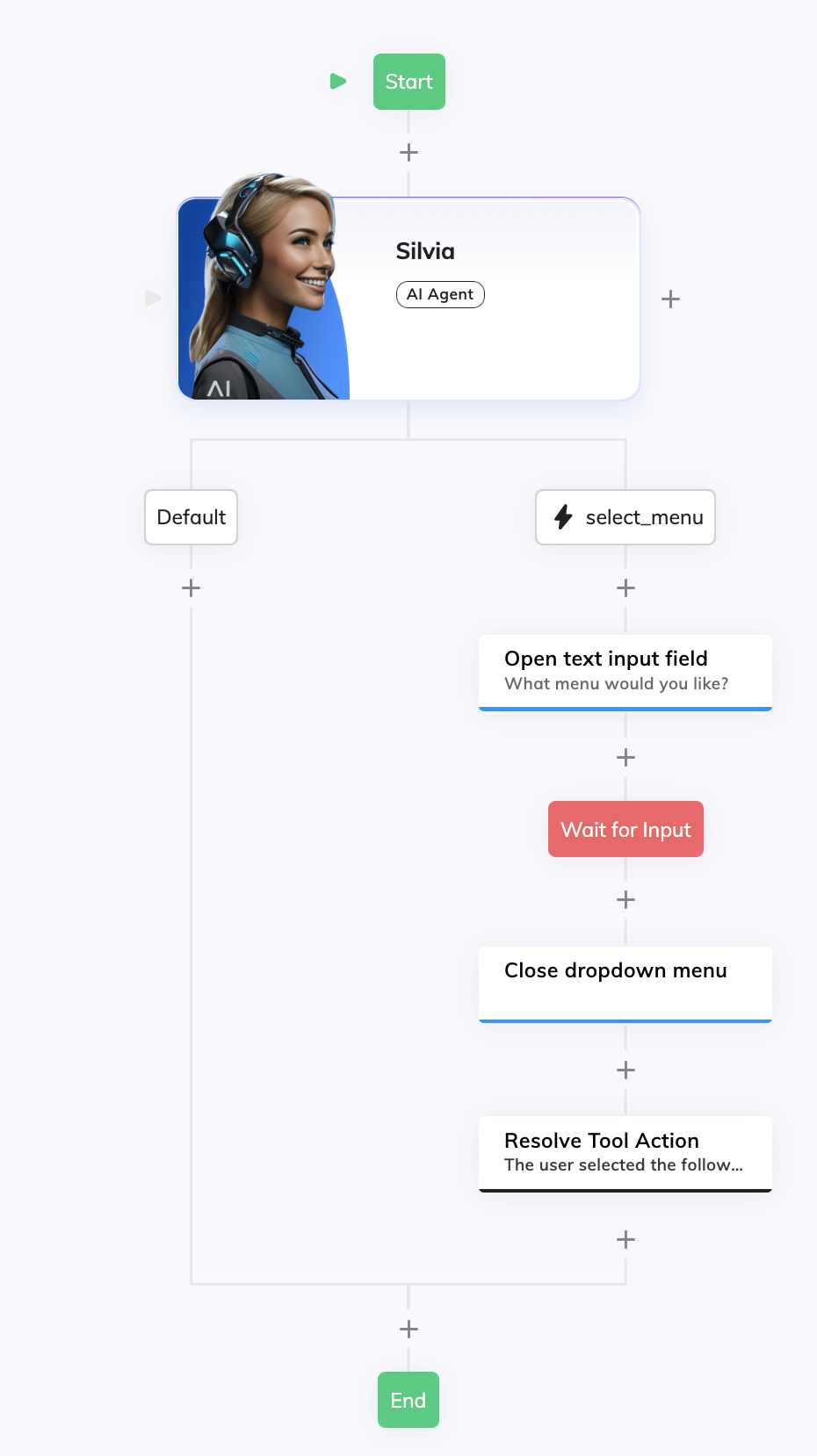

- Configure a Flow with an AI Agent Node and a Question Node. The Flow handles the conversation and opens the selector field on the Click To Call widget.

- Configure a Voice Gateway Endpoint. The Voice Gateway Endpoint connects the Flow to Voice Gateway and activates the Click To Call widget.

- Embed and test the widget. Add the widget script to your page and see the Click To Call widget working.

Set Up Click To Call Widget with Selector Field

Create a Flow

- Go to Build > Flows and click + New Flow.

- On the New Flow panel, configure the following:

  - Name: add a unique name, for example, `Click To Call Assistant`.

  - (Optional) Description: add a relevant description, for example, `This Flow supports a Click To Call widget and a selector field.`

Configure an AI Agent Node

- In the Flow editor, add an AI Agent Node and configure the following:

  - AI Agent: select the AI Agent you previously created, for example, `Cantina Assistant`.

  - Job Name: enter `Cantina Specialist`.

  Save the Node.

- Next to the AI Agent Node, click + and select Tool.

- Configure the Tool Node as follows:

  - Tool ID: enter `select_menu`.

  - Description: enter `Trigger this tool as soon as the customer needs help selecting a menu.`

  Save the Node.

Configure a Say Node to Open the Selector

- Below the `select_menu` child Node of the AI Agent Node, add a Say Node.

- Configure the Say Node as follows:

  - In the Options section, add code to the Data field that sends `openInput: true` to the widget.

  - In the Settings section, enter `Open selector` in the Label field. This label makes the function of the Node easier to identify.

- Add a Wait for Input Node to wait for the user to select or say an option.

Configure a Say Node to Close the Selector

- Below the Wait for Input Node, add a Say Node and configure the following:

  - In the Options section, add code to the Data field that sends `closeInput: true` to the widget.

  - (Optional) In the Settings section, enter `Close selector` in the Label field.
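As a rough sketch, the Data fields of the two Say Nodes might carry payloads like the ones below. This assumes each payload consists only of the `openInput` or `closeInput` flag that the widget script reacts to. The variable names are illustrative and not part of the product, and your project may need additional fields.

```javascript
// Assumed Data payload for the "Open selector" Say Node.
const openSelectorData = { openInput: true };

// Assumed Data payload for the "Close selector" Say Node.
const closeSelectorData = { closeInput: true };

// Serialize the payloads as JSON so they can be pasted into the Data fields.
const openSelectorJson = JSON.stringify(openSelectorData, null, 2);
const closeSelectorJson = JSON.stringify(closeSelectorData, null, 2);

console.log(openSelectorJson);
console.log(closeSelectorJson);
```

Keeping one flag per Say Node makes it easy to see in the Flow which Node opens the selector and which one closes it.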

Complete Flow


Configure a Voice Gateway Endpoint

Create a Voice Gateway Endpoint

- Go to Deploy > Endpoints.

- Click + New Endpoint, select Voice Gateway, and configure the following:

  - Name: enter a name, for example, `Click To Call Cantina Support`.

  - Flow: select the Flow you previously configured, in this example, `Click To Call Assistant`.

- Click Save.

Generate the Click To Call Embedding Code

- In the Endpoint settings, find Click To Call Embedding HTML.

- Click the Set Up Click To Call Integration button in that field to generate the embedding code in the code editor. Hover over the code editor and click the Copy to clipboard button. You will use this code to embed the Click To Call widget in your website.
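The embedding code points the widget at your Endpoint through an Endpoint configuration URL. The sketch below assumes that this URL has the form `<ENDPOINT_URL>/<YOUR_ENDPOINT_CONFIG_TOKEN>`, as in the example URLs on this page. Both helper functions are hypothetical and not part of Cognigy.AI. They only illustrate how you might compare the URL in your page with the one you copied.

```javascript
// Hypothetical helper: split an Endpoint configuration URL into the
// Endpoint URL (origin) and the configuration token (last path segment).
function splitEndpointConfigUrl(configUrl) {
  const url = new URL(configUrl);
  const segments = url.pathname.split("/").filter(Boolean);
  if (segments.length === 0) {
    throw new Error("No Endpoint configuration token found in URL");
  }
  return {
    endpointUrl: url.origin,
    configToken: segments[segments.length - 1],
  };
}

// Hypothetical check: does the URL used in the page match the copied one?
function isSameEndpointConfig(pageUrl, copiedUrl) {
  const page = splitEndpointConfigUrl(pageUrl);
  const copied = splitEndpointConfigUrl(copiedUrl);
  return (
    page.endpointUrl === copied.endpointUrl &&
    page.configToken === copied.configToken
  );
}

// Example URLs taken from this page (development and production).
const token = "ab8b929039b427fed7ee84bb799acd7f254fc9254be27c87a78fc8a70fb048ec";
const devUrl = `https://endpoint-dev.cognigy.ai/${token}`;
const prodUrl = `https://endpoint.cognigy.ai/${token}`;

console.log(splitEndpointConfigUrl(devUrl).endpointUrl); // https://endpoint-dev.cognigy.ai
console.log(isSameEndpointConfig(devUrl, prodUrl)); // false
```

A check like this catches the case where a page still points at the development environment after the production Endpoint has been set up.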

Enable and Configure the Click To Call Widget

- In Click To Call Widget Settings, configure the following:

  - Enable Click To Call Widget: activate the toggle.

  - AI Agent Name: the name shown on the widget, for example, `Cantina Support`.

  - Tagline: the short text under the name, for example, `Let me know what you want to eat!`

  - (Optional) AI Agent Avatar Logo URL: the URL of the image shown on the widget, for example, `https://www.<domain>.com/logo.png`. If empty, the Cognigy.AI logo is used.

  - (Optional) Theme: select the color theme of the widget.

- Save the Endpoint.

Embed and Test Widget

Embed the Widget in Your Website

- Paste the code you copied previously from the Endpoint settings into your page's `<body>`, ideally just before the closing `</body>` tag.

In the embedding code, `<ENDPOINT_URL>` and `<YOUR_ENDPOINT_CONFIG_TOKEN>` stand for the Endpoint URL and the Endpoint configuration token, respectively. For example, the Endpoint configuration URL is https://endpoint-dev.cognigy.ai/ab8b929039b427fed7ee84bb799acd7f254fc9254be27c87a78fc8a70fb048ec for the development environment or https://endpoint.cognigy.ai/ab8b929039b427fed7ee84bb799acd7f254fc9254be27c87a78fc8a70fb048ec for the production environment. Make sure the Endpoint configuration URL in your page is the same as the one you copied from Click To Call Embedding HTML in the Voice Gateway Endpoint.

- Edit the widget script to add the dropdown menu and the events that open and close the selector when the Flow sends `openInput: true` or `closeInput: true` in the Say Nodes.
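The open and close logic described in the last step can be sketched independently of the widget itself. The example below assumes that your script receives the Data payload of each Flow message as a plain object. The names `nextSelectorState`, `renderSelector`, and `menu-selector` are hypothetical and not part of the widget. How the payload reaches your script depends on the events exposed by the generated embedding code, which this sketch does not cover.

```javascript
// Menu options offered in the dropdown (the same ones used in the test steps).
const MENU_OPTIONS = ["Mediterranean", "Light", "Comfort", "Vegetarian"];

// Hypothetical helper: derive the next selector state from the Data payload
// of a message sent by the Flow. Payloads without a known flag leave the
// state unchanged.
function nextSelectorState(state, data) {
  if (data && data.openInput === true) {
    return { ...state, open: true };
  }
  if (data && data.closeInput === true) {
    return { ...state, open: false };
  }
  return state;
}

// Hypothetical helper: build the dropdown markup as an HTML string.
// Returns an empty string while the selector is closed.
function renderSelector(state) {
  if (!state.open) return "";
  const options = MENU_OPTIONS
    .map((name) => `<option value="${name}">${name}</option>`)
    .join("");
  return `<select id="menu-selector">${options}</select>`;
}

// Simulate the two Say Nodes firing in order.
let selectorState = { open: false };
selectorState = nextSelectorState(selectorState, { openInput: true });
console.log(renderSelector(selectorState)); // markup with the four options
selectorState = nextSelectorState(selectorState, { closeInput: true });
console.log(renderSelector(selectorState) === ""); // true
```

Keeping the state transition in a pure function makes it possible to test the open and close behavior without a browser or a live call. In the page, you would call it from whatever message callback the embedding code provides and write the rendered markup into the widget's selector area.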

Test the Widget

- Open the page in a browser, allow microphone access when prompted, and click on the widget to start a voice conversation with your AI Agent.

- After the AI Agent greets you, say you want to select a menu. The selector field opens on the widget.

- Try answering the question using the dropdown (Mediterranean, Light, Comfort, Vegetarian) and by voice.