Prerequisites

- Configured Flow with Voice Gateway Nodes.

- To route your contact center or phone number to your Voice Gateway Endpoint, contact Cognigy support.

Generic Endpoint Settings

Learn about the generic Endpoint settings available for this Endpoint on the following pages:

- Endpoints Overview

- Data Protection & Analytics

- Transformer Functions

- NLU Connectors

- Session Management

- Real-Time Translation Settings

- Copilot

Specific Endpoint Settings

Click To Call Widget Settings


The Voice Gateway Endpoint includes the Click To Call embedding HTML code and the Click To Call Widget Settings section for configuring Click To Call. Click To Call lets you embed a multimodal widget into your website, customer portal or mobile app so customers can be connected through one click to your voice AI Agent.

| Parameter | Type | Description |

|---|---|---|

| Enable Click To Call Widget | Toggle | Activates the Click To Call widget for the Endpoint. This setting is active by default. |

| AI Agent Name | String | Sets the name displayed on the Click To Call widget. |

| Tagline | String | Sets the subtext displayed under the AI Agent name. |

| AI Agent Avatar Logo URL | String | Sets the URL to the image displayed on the Click To Call widget. If left empty, the widget uses the Cognigy.AI logo. |

| Theme | String | Sets the color theme of the Click To Call widget. Select one of the available options. |

| Display Live Transcription | Toggle | Displays the conversation transcript as messages in the Click To Call widget. By default, the toggle is activated. |

| Enable Privacy Notice | Toggle | Displays a privacy notice at the beginning of each chat session to inform users about data handling practices and prompts the users to accept or decline the privacy policy. Accepting the privacy policy is required to start the conversation, ensuring compliance and user trust. If the user declines the privacy policy, the conversation doesn’t start. |

| Privacy Notice Title | String | This parameter is displayed only if you toggle on Enable Privacy Notice. Sets the heading that appears at the top of the privacy notice screen. By default, it is set to Privacy Notice. |

| Privacy Notice Text | String | This parameter is displayed only if you toggle on Enable Privacy Notice. Sets the message that users see in the privacy notice prompt. This parameter supports Markdown syntax. The default text is Please accept our privacy policy to start your chat. You can customize this message to provide more detailed information about your data collection, usage practices, and users’ rights, ensuring clarity and building trust from the outset of the chat interaction. |

| Submit Button | String | This parameter is displayed only if you toggle on Enable Privacy Notice. Sets the text displayed on the button users click to accept the Privacy Notice. By default, the button is labeled Submit. |

| Cancel Button Text | String | This parameter is displayed only if you toggle on Enable Privacy Notice. Sets the text displayed on the button users click to decline the Privacy Notice. By default, the button is labeled Cancel. |

| Privacy Policy Link Title | String | This parameter is displayed only if you toggle on Enable Privacy Notice. Sets the text that will be displayed as the title for the Privacy Policy link. The link title allows you to tailor the label of the link to better match the tone and context of your brand. |

| Privacy Policy Link URL | String | This parameter is displayed only if you toggle on Enable Privacy Notice. Sets the URL that directs users to your privacy policy. |

Customization


| Parameter | Type | Description |

|---|---|---|

| Live Transcription Background | Selector | Sets whether a background is displayed for the live transcription messages. This parameter is only available if you toggle on Display Live Transcription. Select one of the available options, such as Custom. |

| Transcription Background Color | Color picker | This parameter is only available if you select Custom in the Live Transcription Background selector. Sets the background color for the live transcription messages. By default, the background is white. |

| Demo Widget Page Background | Selector | Sets how the page background is filled on the Demo Widget page. You can use this field to test your website’s background against the Demo Widget. Select one of the available options, such as Color or Image URL. |

| Demo Widget Page Background Color | Color picker | This parameter is only available if you select Color in the Demo Widget Page Background selector. Sets the background color for the Demo Widget. By default, the background is white. |

| Demo Widget Page Background Image URL | String | This parameter is only available if you select Image URL in the Demo Widget Page Background selector. Sets the URL to the image displayed as the background for the Demo Widget. |

| Center Click To Call Widget | Selector | Sets the position of the Click To Call widget on the page. Select one of the available options. |

Generic Settings


These settings will be valid for every session of this Endpoint.

| Parameter | Type | Description |

|---|---|---|

| Prosody Settings | Toggle | If the parameter is enabled, this configuration will be used to specify changes to speed, pitch, and volume for the text-to-speech output. The Set Session Config or Say Nodes with Activity Parameters enabled can override the Prosody Settings option configured within the Endpoint. |

| Output Speed | Number | This parameter is active only when the Prosody Settings toggle is enabled. Sets the change in the speaking rate for the contained text, as a percentage. Value range: -100 to 200. |

| Output Pitch | Number | This parameter is active only when the Prosody Settings toggle is enabled. Sets the baseline pitch for the contained text, as a percentage. Value range: -100 to 200. |

| Output Volume | Number | This parameter is active only when the Prosody Settings toggle is enabled. Sets the volume for the contained text, as a percentage. Value range: 10 to 200. |

| Show Best Transcripts Only | Toggle | If the parameter is enabled, only the best transcript in the input object is accessible, instead of all variations. |

| Enable Call Event: Call in Progress | Toggle | If the parameter is enabled, the Call in Progress toggle appears in the Call Events section. |

As with all Speech Provider settings, the way this feature is used differs between providers. Refer to your Speech Provider’s documentation first to understand which values to add. For example, Microsoft Azure and Google handle these values differently. For Microsoft Azure, specify the percentage by which you want to increase or decrease the default setting. For instance, an Output Speed of -20% indicates a 20% reduction from the default output speed of 100%, resulting in a new output speed of 80%. To achieve the same effect with Google, enter the final output speed as a percentage in the Prosody Settings. For example, an Output Speed of 80% sets the new output speed directly.
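The difference between the two providers can be sketched as a small conversion helper. This is only an illustration of the arithmetic described above; the function name and the vendor keys `azure` and `google` are hypothetical and not part of any Cognigy or provider API.

```python
def prosody_speed_value(target_percent: float, vendor: str) -> float:
    """Return the Output Speed value to enter for a desired final speed.

    target_percent is the desired final speed, where 100 is the default.
    The vendor keys below are illustrative assumptions.
    """
    vendor_key = vendor.strip().lower()
    if vendor_key == "azure":
        # Azure expects the relative change from the 100% default.
        return target_percent - 100
    if vendor_key == "google":
        # Google expects the final speed itself.
        return target_percent
    raise ValueError(f"No conversion rule sketched for vendor: {vendor}")


if __name__ == "__main__":
    print(prosody_speed_value(80, "azure"))
    print(prosody_speed_value(80, "google"))
```

For other providers, check the provider’s documentation before choosing a value, as the note above recommends.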

AudioCodes Compatibility Mode

Allows using both AudioCodes Nodes and Voice Gateway Nodes simultaneously in a Flow.

When this mode is enabled, the system treats Flows with AudioCodes Nodes as if they were Flows with Voice Gateway Nodes.

This mode helps ensure that voice AI Agents operate smoothly without interruptions during the transition from AudioCodes to Cognigy Voice Gateway.

To activate the AudioCodes Compatibility Mode section, add the `FEATURE_VG_AC_COMPATIBILITY_MODE` feature flag to the Cognigy.AI `values.yaml` file.

For more information, read Migration from AudioCodes to Voice Gateway.

Call Events

Allows activating call events for a Flow.

Select a call event from the Voice Gateway Events list.

This event will trigger the action.

If you have configured the same call event in both the Endpoint and the Lookup Node, the Endpoint settings will overwrite the Node settings.

Call Event Settings

As with all other Endpoint settings, you can’t test the Call Events settings within the Interaction Panel.

| Parameter | Type | Description |

|---|---|---|

| Action | Dropdown | Select the action to be performed when the call event is detected: - Inject into current Flow — inject the defined text and data payload into the current flow. - Execute Flow — trigger a selected Flow when the call event is detected. - None — no action will be taken when the call event is detected. - Transfer — transfer a call in case TRANSFER_DIAL_ERROR or TRANSFER_REFER_ERROR call events occur. This action for call events is configured similarly to Call Failover. |

| Text Payload | String | Enter the text that will be sent to your Flow. The parameter is available only for the Inject into current Flow action. |

| Data Payload | JSON | Provide the data that will be sent into your Flow in JSON format. The parameter is available only for the Inject into current Flow action. |

| Execute Flow | Dropdown | Execute the selected Flow. The parameter is available only for the Execute Flow action. Note that you can’t use Go To or Execute Flow Nodes in the executed Flow. When the Flow is fully processed, with all Nodes from Start to End executed, any dependent Flows won’t be triggered. |
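Because the Data Payload field expects JSON, it can help to check a payload before pasting it into the Endpoint. The following is a minimal sketch, assuming the payload should be a JSON object; the function name and the keys in the sample payload are hypothetical.

```python
import json


def parse_data_payload(text: str) -> dict:
    """Parse a Data Payload string and make sure it is a JSON object."""
    payload = json.loads(text)  # raises json.JSONDecodeError on invalid JSON
    if not isinstance(payload, dict):
        # Assumption: the Flow receives an object rather than a bare list or string.
        raise ValueError("Data Payload should be a JSON object")
    return payload


if __name__ == "__main__":
    # The keys below are made up for illustration.
    sample = '{"event": "TRANSFER_DIAL_ERROR", "retry": false}'
    print(parse_data_payload(sample))
```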

Call Failover


The Call Failover section is intended to handle runtime and speech provider authentication errors that occur on the Cognigy.AI side during the execution of a Flow.

- If you use a SaaS Cognigy installation, contact the support team to activate this feature.

- If you use an on-premises Cognigy installation, activate this feature by adding `FEATURE_ENABLE_ENDPOINT_CALL_FAILOVER` in `values.yaml`.

For Voice Gateway 2025.19 and later, the Anchor Media parameter has been renamed to Media Path and includes more options. The previously available options correspond to:

- Full Media — the toggled on Anchor Media parameter in earlier versions.

- Partial Media — the toggled off Anchor Media parameter in earlier versions.

The Media Path parameter is controlled by the `FEATURE_DISABLE_VG_MEDIA_PATH` feature flag, which has a default value of false. The parameter is available only in environments where the feature flag is set to false.

| Parameter | Type | Description | Transfer Type |

|---|---|---|---|

| Flow execution failover enabled | Toggle | If enabled, the configuration below will be used to perform a transfer in case of a runtime error. If the Speech provider failover toggle is also enabled, the configuration below applies to cases of runtime errors or failed speech provider authentication. | - |

| Speech provider failover enabled | Toggle | If enabled, the configuration below will be used to perform a transfer in case the speech provider authentication fails. If the Flow execution failover toggle is also enabled, the configuration below applies to cases of runtime errors or failed speech provider authentication. | - |

| Transfer Type | Dropdown | There are two transfer types: - Refer — forwarding an existing call. - Dial — creating a new outgoing call. If you want to use this type and still have the old Node version, add a new Voice Gateway Transfer Node in the Flow Editor and manually transfer the required settings from the old Node. | - |

| Reason | CognigyScript | The reason for the handover. It is shown in Voice Gateway logs. | All |

| Target | CognigyScript | The transfer target, specified in E.164 syntax or as a SIP URI. | All |

| Caller ID | Number | The caller ID. Some carriers, like Twilio, require a registered number for outgoing calls. | Dial |

| Dial Music | URL | Custom audio or ring-back which plays to the caller while the outbound call is ringing. Only .wav or .mp3 files are supported. The URL doesn’t need to include the .mp3 or .wav extension. For example, https://abc.xyz/music.mp3 or https://audio.jukehost.co.uk/N5pnlULbup8KabGRE7dsGwHTeIZAwWdr. | Dial |

| Timeout | Number | The amount of time (in seconds) that the AI Agent will ring before a no-answer timeout. The default value is 60 seconds. | Dial |

| Enable Duration Limit for Transfer | Toggle | Automatically disconnects transferred calls after a set time to prevent excessive call durations. This setting is useful in the following cases: - Limit call duration by automatically ending transferred calls that exceed the predefined time, helping manage system performance. - Free up resources by disconnecting calls if the caller forgets to hang up, making lines available for other callers. - Reduce costs by preventing unnecessary charges from long, unattended calls. | Dial |

| Duration Limit | Number | This parameter is active only when Enable Duration Limit for Transfer is selected. Set the maximum duration in seconds. Transferred calls end automatically after this time, even if the caller is on the line. The default value is 600. | Dial |

| Enable Copilot | Toggle | Creates the UUIValue that is sent to the Contact Center through SIP headers. The UUIValue is built from the information in the Transcription Webhook field and the VG Endpoint Copilot Config field. This setting requires a configured Voice Copilot Endpoint. | Dial |

| Failover Transcribe Enabled | Toggle | If enabled, transcriptions will be attempted in case of a failed call transfer. | Dial |

| Media Path | Dropdown | Controls the routing of RTP traffic through a media platform, such as FreeSwitch, for monitoring, transcoding, and security purposes. You can also change this value during an active call using the updateCall API. Select one of the available options, such as Full Media or Partial Media. | Dial |

| STT Vendor | Dropdown | Defines the STT vendor. You can select a custom vendor. The behavior of the Default option in the Set Session Config Node depends on whether you select this option in the first or in a subsequent Node of this type. | Dial |

| STT Language | Dropdown | Select the desired STT Language. For custom languages, use the following format: de-DE, fr-FR, en-US. | Dial |

| Disable STT Punctuation | Toggle | This parameter is active only when Google or Deepgram is selected from the STT Vendor list. Prevents the transcription returned to the AI Agent from including punctuation marks. | Dial |

| Deepgram Model | Dropdown | This parameter is active only when Deepgram is selected from the STT Vendor list. Choose a model for processing submitted audio. Each model is associated with a tier. Ensure that the selected tier is available for the chosen STT language. For detailed information about Deepgram models, refer to the Deepgram documentation. | Dial |

| Endpointing | Toggle | This parameter is active only if Deepgram or Deepgram Flux is selected from the STT Vendor list. | Dial |

| Endpointing Time | Number | This parameter is active only when Deepgram is selected from the STT Vendor list and the Endpointing toggle is enabled. Customize the duration (in milliseconds) for detecting the end of speech. The default is 10 milliseconds of silence. After detecting silence, the system waits until either the speaker resumes or the required silence duration is reached. Once one of these conditions is met, the transcript is sent with speech_final set to true. | Dial |

| Smart Formatting | Toggle | This parameter is active only when Deepgram is selected from the STT Vendor list. Deepgram’s Smart Format feature applies additional formatting to transcripts to optimize them for human readability. Smart Format capabilities vary between models. When Smart Formatting is turned on, Deepgram will always apply the best-available formatting for your chosen model, tier, and language combination. For detailed examples, refer to the Deepgram documentation. Note that when Smart Formatting is turned on, punctuation will be activated, even if you have the Disable STT Punctuation setting enabled. | Dial |

| Google Model | Dropdown | This parameter is active only when Google is selected from the STT Vendor list. Uses one of Google Cloud Speech-to-Text transcription models. The default value is latest_short. For a detailed list of Google models, refer to the Transcription models section in the Google Documentation. Keep in mind that the default value is a Google model type that can be used if other models don’t suit your use case. | Dial |

| End of Turn Threshold | Slider | This parameter is active only if Deepgram Flux is selected from the STT Vendor list. Defines the confidence level for detecting when a user’s speech ends. Higher values improve accuracy but may increase latency. The default value is 0.7, meaning the system waits until it is 70% confident that the user has finished speaking before finalizing the transcript. | Dial |

| End of Turn Timeout in Milliseconds | Number | This parameter is active only if Deepgram Flux is selected from the STT Vendor list. Defines the maximum number of milliseconds to wait before finalizing a turn. The timeout acts as a backup if the user stops speaking unexpectedly. The default value is 5000 (5 seconds), meaning the turn will be closed after 5 seconds of silence. | Dial |

| Transcription Webhook | CognigyScript | The webhook is triggered with an HTTP POST whenever an interim or final transcription is received. If the STT Vendor and STT Language fields are empty, the system will use the default STT from the Set Session Config Node (if it exists) or from the Voice Gateway Self-Service Portal. The parameter supports CognigyScript, allowing it to accept dynamic content. For example, you can specify the URL as follows: https://test-hook.com?contact={{ci.contact_name}}. Note that if the Voice Copilot Endpoint is inactive, you can use any Webhook URL to receive voice call transcripts. However, when the Voice Copilot Endpoint is enabled, ensure that the specified Webhook URL is associated with it for processing. | Dial |

| STT Label | CognigyScript | The alternative name of the vendor is the one you specify in the Voice Gateway Self-Service Portal. If you have created multiple speech services from the same vendor, use the label to specify which service to use. | Dial |

| Audio Stream Selection | Dropdown | Select the source of the audio stream: - Caller/Called - both the incoming and outgoing audio streams of the caller and the called party. - Caller - the incoming and outgoing audio stream of the caller. - Called - the incoming and outgoing audio stream of the called party. Ensure that the selected audio stream matches the language specified for transcription. If no audio stream is provided, the system will use the one set in the beginning, which should also match the language specified for transcription. | Dial |

| Referred By | String | This parameter is optional. This setting allows you to change the original Referred By value, which can be a SIP URI or a user identifier such as a phone number. To define the Referred By value, you can use the following patterns: - SIP URI - sip:[referred-by]@custom.domain.com. In this case, the entire SIP URI will be sent as the Referred-By header. Example: "Referred-By": "sip:CognigyOutbound@custom.domain.com". - User Identifier - sip:[referred-by]@[SIP FROM Domain from carrier config]. Example: "Referred-By": "sip:CognigyOutbound@sip.cognigy.ai". | Refer |

| Custom Transfer SIP Headers | Toggle | Data that needs to be sent as SIP headers in the generated SIP message. | All |
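The Target parameter accepts E.164 numbers or SIP URIs. As a rough illustration, a transfer target could be checked before it is saved. The sketch below is not how Voice Gateway validates targets; the function name is hypothetical, and the SIP URI pattern is deliberately loose rather than a full implementation of the SIP URI grammar.

```python
import re

# E.164: a plus sign followed by up to 15 digits, the first of which is not 0.
E164_PATTERN = re.compile(r"^\+[1-9]\d{1,14}$")
# Loose SIP URI check: sip: or sips:, a user part, an @ sign, and a host part.
SIP_URI_PATTERN = re.compile(r"^sips?:[^@\s]+@[^@\s]+$")


def classify_target(target: str) -> str:
    """Return 'e164', 'sip_uri', or 'invalid' for a transfer target."""
    value = target.strip()
    if E164_PATTERN.match(value):
        return "e164"
    if SIP_URI_PATTERN.match(value):
        return "sip_uri"
    return "invalid"


if __name__ == "__main__":
    for candidate in ("+4921154591991", "sip:CognigyOutbound@sip.cognigy.ai", "0211 545"):
        print(candidate, "->", classify_target(candidate))
```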

Changing the Media Path during an Active Call

Besides setting the media path in the Flow, you can also change the media path during an active transferred call using the Voice Gateway API.

This approach is useful for PCI compliance. For example, when a caller shares sensitive payment information, Voice Gateway, as an intermediate system, must be removed from the media stream. Audio is then routed directly between the caller and the contact center, bypassing Voice Gateway, which reduces PCI data exposure and helps protect sensitive data.

To remove Voice Gateway from the media path, send the `PUT /Accounts//Calls/` API request with `{ "media_path": "noMedia" }` from the contact center to Voice Gateway.

Capture the call SID before the transfer takes place. During a transfer, a new call SID is created for the new call leg, but the original call SID remains unchanged. Pass the original call SID along during the transfer, for example, in SIP headers. This way, the contact center can reference the original call when triggering the API request at the correct moment.
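The request described above could be prepared on the contact center side roughly as follows. This is a sketch under several assumptions: the base URL, the bearer-token authentication, and the function name are hypothetical, and the two path segments are assumed to be an account SID and the original call SID. Check the Voice Gateway API reference for the actual path and authentication scheme.

```python
import json
import urllib.request


def build_media_path_request(base_url, account_sid, call_sid, api_token):
    """Build (but do not send) a PUT request that sets media_path to noMedia."""
    # Assumption: the account SID and the original call SID fill the path segments.
    url = f"{base_url.rstrip('/')}/Accounts/{account_sid}/Calls/{call_sid}"
    body = json.dumps({"media_path": "noMedia"}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={
            "Content-Type": "application/json",
            # Assumption: bearer-token authentication.
            "Authorization": f"Bearer {api_token}",
        },
    )


if __name__ == "__main__":
    request = build_media_path_request(
        "https://vg.example.com/v1", "my-account-sid", "original-call-sid", "my-token"
    )
    print(request.get_method(), request.full_url)
    # To send the request: urllib.request.urlopen(request)
```

Building the request separately from sending it makes it easier to trigger the call at the right moment, for example, just before the caller starts sharing payment details.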

Handover Settings

You can use any handover provider within the Voice Gateway integration.

The Handover Settings enable your voice agent to route calls to the contact center, ensuring efficient communication and swift access to human assistance.

In contrast to chatting, communication between actors during a voice call relies on speech technologies (text-to-speech and speech-to-text).

Using speech-to-text technology, the voice agent sends the conversation script from the end user to the human agent.

Once the script is received, the human agent can respond using text.

After that, the voice agent converts the human agent’s message to speech using text-to-speech technology.

Finally, the converted message is sent to the end user.

Configuration

To activate the Handover Settings, you need to add a Handover to Human Agent Node in your voice Flow. This Node transfers the conversation from the voice agent to the human agent.

In this example, an end user seeks billing assistance and interacts with agents in a customer service system via text-to-speech and speech-to-text technologies.

- End User: Calls the customer service line. Speaks to the voice agent. Example: "Hello, I need assistance with my billing inquiry."

- Voice Agent: Transcribes user’s speech into text using speech-to-text technology. Example: "Hello, I need assistance with my billing inquiry."

- Voice Agent: Determines the need for human assistance. Initiates handover to the human agent via handover provider.

- Human Agent: Receives a conversation script containing user’s query.

- Human Agent: Types response on interface. Example: "Hello, thank you for reaching out. Let me check your billing details."

- Voice Agent: Receives human agent’s typed response. Converts the text message into speech using text-to-speech technology.

- End User: Hears human agent’s response spoken by voice agent. Example: "Hello, thank you for reaching out. Let me check your billing details."

- End User / Human Agent: The conversation between the end user and the human agent continues until the issue is resolved or the interaction is concluded.

Multilingual Support

Within the Handover Settings, you can use translation into other languages. Use the translation feature in the Handover Settings to overcome language barriers between end users and support staff. This feature is particularly useful in situations where there are no support staff proficient in the end user’s language, and urgent issue resolution is necessary.

For example, if a user communicates in German, the voice agent responds accordingly. However, after transferring the call to the contact center, the dialogue is translated into English. The human agent responds in English, and the response is translated into German for the end user via text-to-speech technology. This method ensures seamless communication despite language differences.

Note that depending on the language and provider selected, translations may experience delays while they reach the contact center. If the speech-to-text fails to recognize speech for any reason, the message won’t appear on the contact center’s side. Plan for such scenarios within your Flow and configure the voice agent’s behavior accordingly. This approach will ensure that the end user is aware that their message may not be delivered. You can achieve this by adjusting timeout settings and error handling policies.

To set up a multilingual Flow for the handover use case, follow these steps:

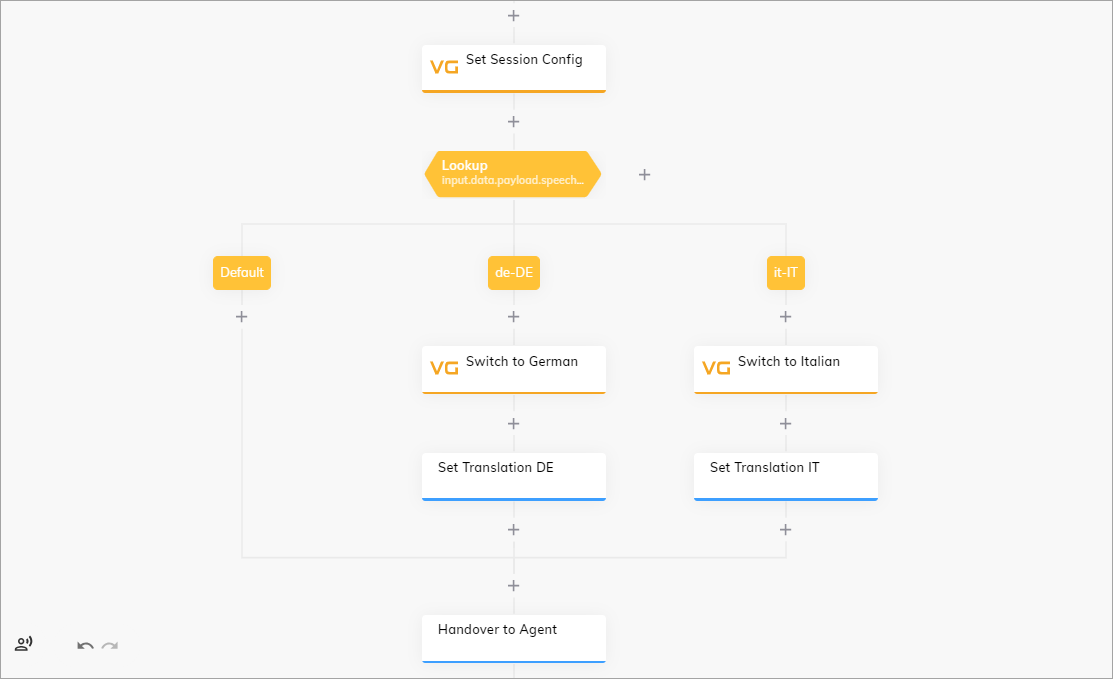

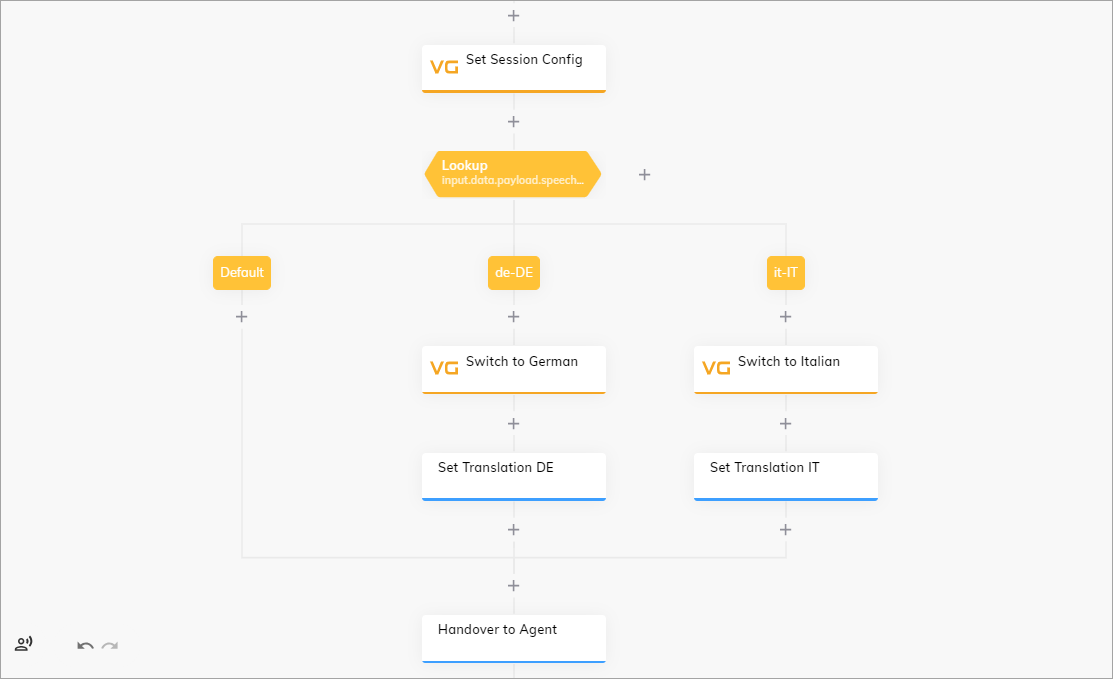

- In your voice Flow, navigate to the Set Session Config Node.

- In the Node editor, go to the Recognizer STT section and activate the Recognize Language toggle.

- From the Alternative Language list, select the additional languages, for example, German and Italian.

- Below the Set Session Config Node, add a Lookup Node.

- In the parent Lookup Node, select CognigyScript from the Type list and specify `input.data.payload.speech.language_code` in the Operator field.

- In the case Nodes of the Lookup Node, specify the language codes, for example, `de-DE`, `it-IT`.

- Below each case Node, add a second Set Session Config Node.

- Configure the TTS and STT settings for each of these Set Session Config Nodes. This means that one Node should have German selected, while the other should have Italian. These Nodes will manage the voice agent’s communication in the end user’s language.

- Below each Set Session Config Node, add a Set Translation Node. These Nodes translate text from the end user’s language to the human agent’s language.

- In the Set Translation Node, activate the Translation Enabled toggle.

- In the User Input Language field, specify the language of the user input, for example, `de`, `it`.

- In the Flow Language field, specify the language to translate to, for example, `en`.

- At the end of the Flow, place the Handover to Human Agent Node.
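The branching that the Lookup Node performs in the steps above can be pictured as a simple table lookup on the detected language code. The sketch below only mirrors that logic outside of Cognigy.AI; the dictionary layout, key names, and function name are hypothetical, and the sample input imitates the `input.data.payload.speech.language_code` path.

```python
from typing import Optional

# Hypothetical table mirroring the case Nodes and Set Translation Node fields.
LANGUAGE_SETTINGS = {
    "de-DE": {"user_input_language": "de", "flow_language": "en"},
    "it-IT": {"user_input_language": "it", "flow_language": "en"},
}


def translation_settings(input_obj: dict) -> Optional[dict]:
    """Return the translation settings for the detected language, if any."""
    speech = input_obj.get("data", {}).get("payload", {}).get("speech", {})
    return LANGUAGE_SETTINGS.get(speech.get("language_code"))


if __name__ == "__main__":
    sample_input = {"data": {"payload": {"speech": {"language_code": "de-DE"}}}}
    print(translation_settings(sample_input))
```

A language code without a matching entry returns `None`, which corresponds to a Flow that needs a default case for unsupported languages.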

In this example, a German-speaking end user seeks billing assistance:

- End User: Calls the customer service line. Speaks to the voice agent. Example: "Hallo, ich brauche Hilfe bei meiner Rechnungsanfrage."

- Voice Agent: Determines the language and answers using the same language. Example: "Danke für die Informationen. Ich werde Sie an einen menschlichen Agenten weiterleiten, der Ihnen weiterhelfen kann."

- Voice Agent: Transcribes user’s speech into text using speech-to-text technology. Example: "Hello, I need assistance with my billing inquiry."

- Voice Agent: Determines the need for human assistance and initiates the handover to the human agent via the handover provider.

- Human Agent: Receives a conversation script containing user’s query.

- Human Agent: Types response on interface. Example: "I've found the issue. It seems there was an error in the billing statement. I'll correct it for you."

- Voice Agent: Receives human agent’s typed response. Converts the text message into speech using text-to-speech technology.

- End User: Hears human agent’s response spoken by voice agent. Example: "Ich habe das Problem gefunden. Es scheint, dass es einen Fehler in der Rechnungsstellung gab. Ich werde es für dich korrigieren."

- End User / Human Agent: The conversation between the end user and the human agent continues until the issue is resolved or the interaction is concluded.

Additional Information

SIP Headers


The SIP headers, including any custom headers, are available within the Input object.

You can find them in `input.data` or `input.data.payload.sip.headers`.
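As an illustration of reading a header from the Input object, the sketch below looks up a header by name in a dictionary shaped like `input.data.payload.sip.headers`. The function name and the `X-Customer-Id` header are hypothetical; header names are compared case-insensitively because SIP header names are not case-sensitive.

```python
def get_sip_header(input_obj: dict, name: str, default=None):
    """Look up a SIP header by name, ignoring case."""
    headers = (
        input_obj.get("data", {}).get("payload", {}).get("sip", {}).get("headers", {})
    )
    wanted = name.lower()
    for key, value in headers.items():
        if key.lower() == wanted:
            return value
    return default


if __name__ == "__main__":
    # Sample input with a made-up custom header.
    sample_input = {"data": {"payload": {"sip": {"headers": {"X-Customer-Id": "12345"}}}}}
    print(get_sip_header(sample_input, "x-customer-id"))
```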

Call Metadata

Voice Gateway identifies information about the caller and adds it to the Input object as `input.data.numberMetaData`.

| Parameter | Type | Description | Example |

|---|---|---|---|

| number | String | The caller’s phone number, including country code. The phone number has E.164 format. | +4921154591991 |

| country | String | The 2-character country code. | DE |

| countryCallingCode | String | The country calling code associated with the phone number. | 49 |

| nationalNumber | String | The national number part of the phone number. It excludes the country code and any leading zero. | 21154591991 |

| ext | String | The extension number, if present. It consists of a numerical sequence, such as 123 or 568, used in PBX systems for streamlined internal phone communications within organizations. Users can dial these numbers for internal calls without needing to dial the full external phone number. | 123 |

| valid | Boolean | The validity of the phone number. | true |

| type | String | The phone number’s classification. Can be any of: - PREMIUM_RATE — a number associated with premium rate services, incurring higher charges. - TOLL_FREE — a toll-free number where the recipient pays for the call. - SHARED_COST — a number where call costs are shared between the caller and the recipient. - VOIP — a number associated with Voice over Internet Protocol (VoIP) services. - PERSONAL_NUMBER — a non-geographic number typically associated with individuals. - PAGER — a number associated with a pager device for receiving short messages. - UAN — a universal Access Number for nationwide business services. - VOICEMAIL — a number used for accessing voicemail services. - FIXED_LINE_OR_MOBILE — a fixed-line or mobile number. - FIXED_LINE — a traditional landline phone number. - MOBILE — a number associated with a mobile/cellular device. | FIXED_LINE |

| uri | String | The URI associated with the phone number, if applicable. | tel:+4921154591991 |

The data in `input.data.numberMetaData` is available as Tokens inside Cognigy Text fields.
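To show how the metadata fields fit together, the sketch below builds a short caller description from a dictionary shaped like `input.data.numberMetaData`. The sample values are taken from the Example column of the table above; the function name and the fallback strings are hypothetical.

```python
def describe_caller(input_obj: dict) -> str:
    """Summarize the caller based on the number metadata, if it is valid."""
    meta = input_obj.get("data", {}).get("numberMetaData", {})
    if not meta.get("valid", False):
        return "unknown caller"
    return f"{meta.get('type', 'UNKNOWN')} number from {meta.get('country', '??')}"


if __name__ == "__main__":
    sample_input = {
        "data": {
            "numberMetaData": {
                "number": "+4921154591991",
                "country": "DE",
                "countryCallingCode": "49",
                "nationalNumber": "21154591991",
                "valid": True,
                "type": "FIXED_LINE",
                "uri": "tel:+4921154591991",
            }
        }
    }
    print(describe_caller(sample_input))
```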